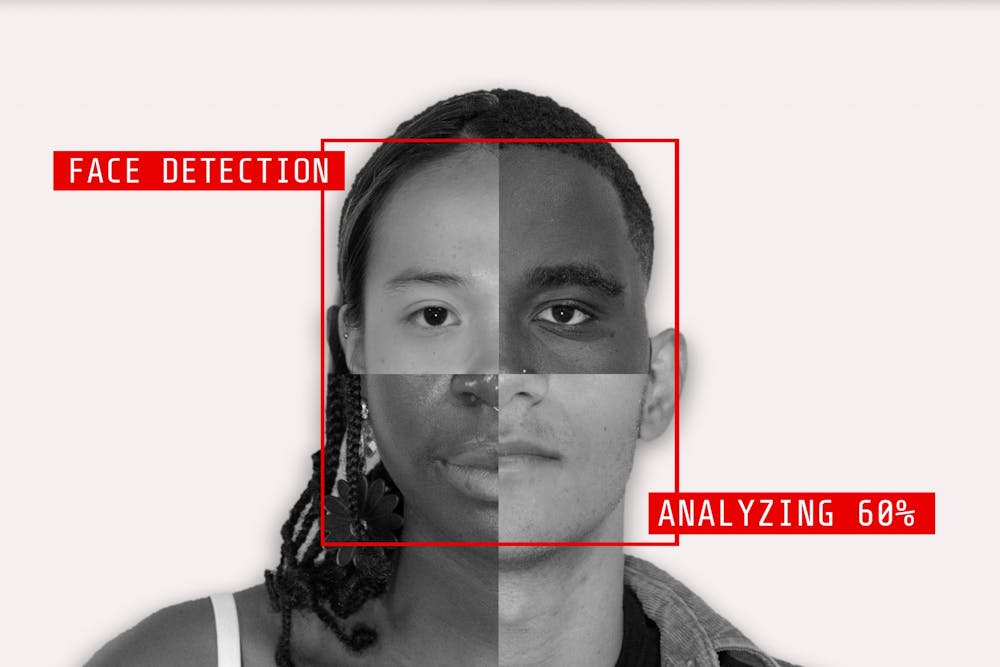

The fear that robots are taking over the world might not be that far-fetched.

While artificial intelligence is praised for its ability to process vast amounts of information, AI seems to be absorbing biases, too. An experiment published in June demonstrated that robots running artificial intelligence exhibited racism and sexism in their decision-making.

While sorting through billions of images, robots in this experiment routinely categorized Black men as "criminals." Similarly, they gave the label "homemaker" to women more often than to men.

Programmers set out to create a machine that could eliminate human error, and in that effort, they have failed.

AI is made by humans and deployed into existing systems and institutions, none of which are free of the sexist and racist practices that run throughout history. Without studies like this one, such ethical concerns could have been missed entirely.

This technology is already ingrained in our lives, often in ways that seem relatively low-stakes. AI can be used to restock grocery shelves, run online shopping algorithms or even play chess.

Systemic issues emerge when AI is built into more than simply shelf-stocking robots.

AI tools are used to screen potential tenants and job candidates, relying on criminal histories and eviction records to make their choices. These screening tools reflect longstanding racial disparities in the criminal legal system, disparities that fall hardest on the marginalized communities these systems have consistently discriminated against.

This may turn out to be only a setback in the development of AI, but without further exploration of the issue, it has the potential to become something more serious.